Olga Mykhoparkina

Founder, CEO

Apr 30, 2026

Something has shifted in how SaaS buyers find tools. A growing number skip Google entirely and go straight to ChatGPT, Claude, or Perplexity with a question like “what’s the best project management tool for a remote team?” and act on whatever comes back.

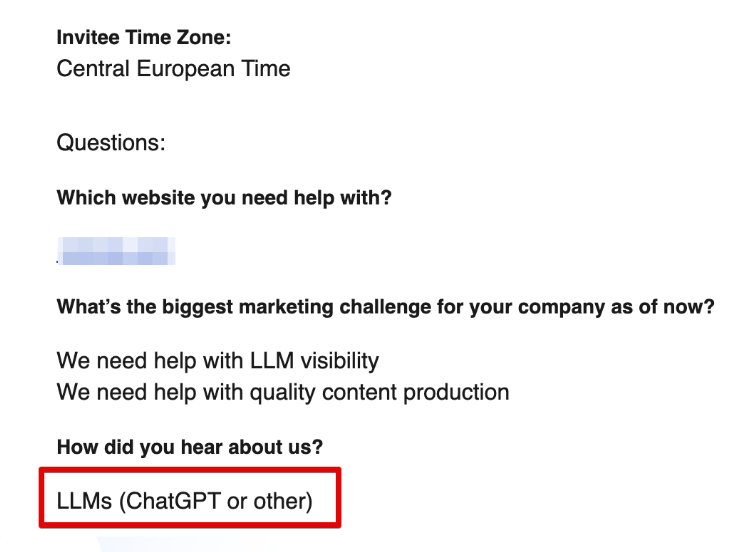

We have proof of this in our own data. New leads who book a call with us increasingly say they found us through ChatGPT or another AI tool. For a company that helps SaaS brands get cited by AI, there’s no stronger signal that this channel is real and converting.

At Quoleady, we research LLM visibility from a SaaS B2B angle. We focus specifically on high-intent prompts, the kind buyers use when they’re actively comparing options or looking for alternatives to tools they already use. That hands-on research is what shapes how we help our clients get cited in those results.

This article is everything we’ve learned. It’s built on our own research and data from thousands of AI citations, combined with direct input from practitioners: SEO specialists, content strategists, agency founders, and GEO consultants, who shared what’s actually working in their client work right now, what they’ve tested, and what they’ve stopped doing.

I said it before: “It’s rare to find a marketing effort that can compete with listicles on ROI.”

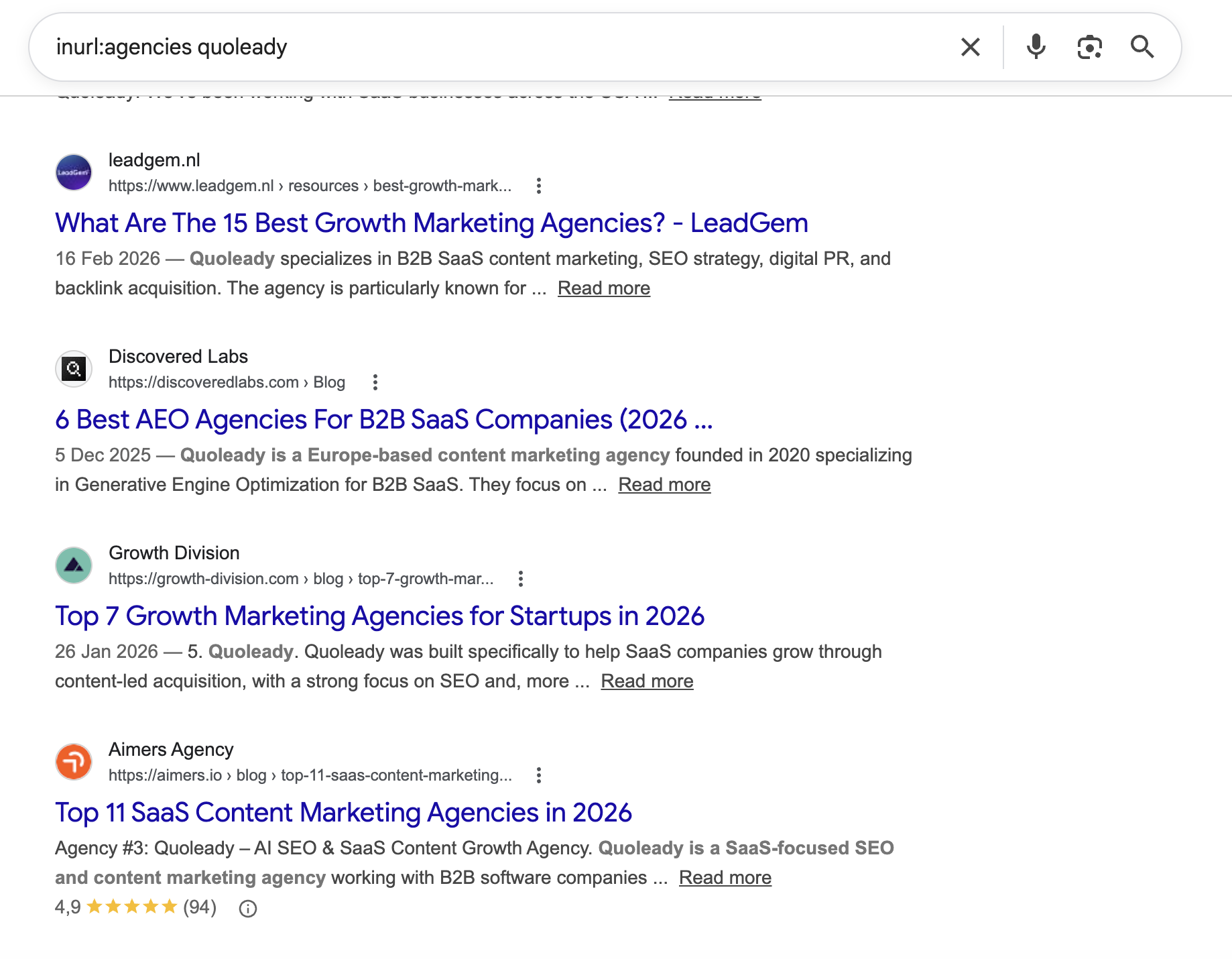

Quoleady is featured in 90+ listicles across the internet for B2B SaaS content marketing. Getting featured in those listicles is what built our AI visibility, and it’s the same work we do for our clients. From our own data, we’ve found a strong correlation between appearing in top-ranking listicles and showing up in high-intent AI-powered answers.

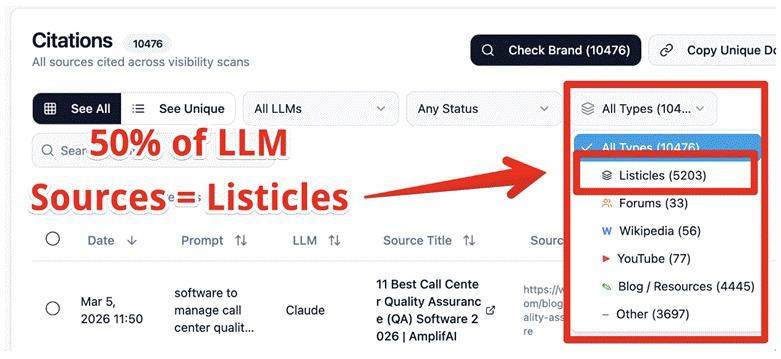

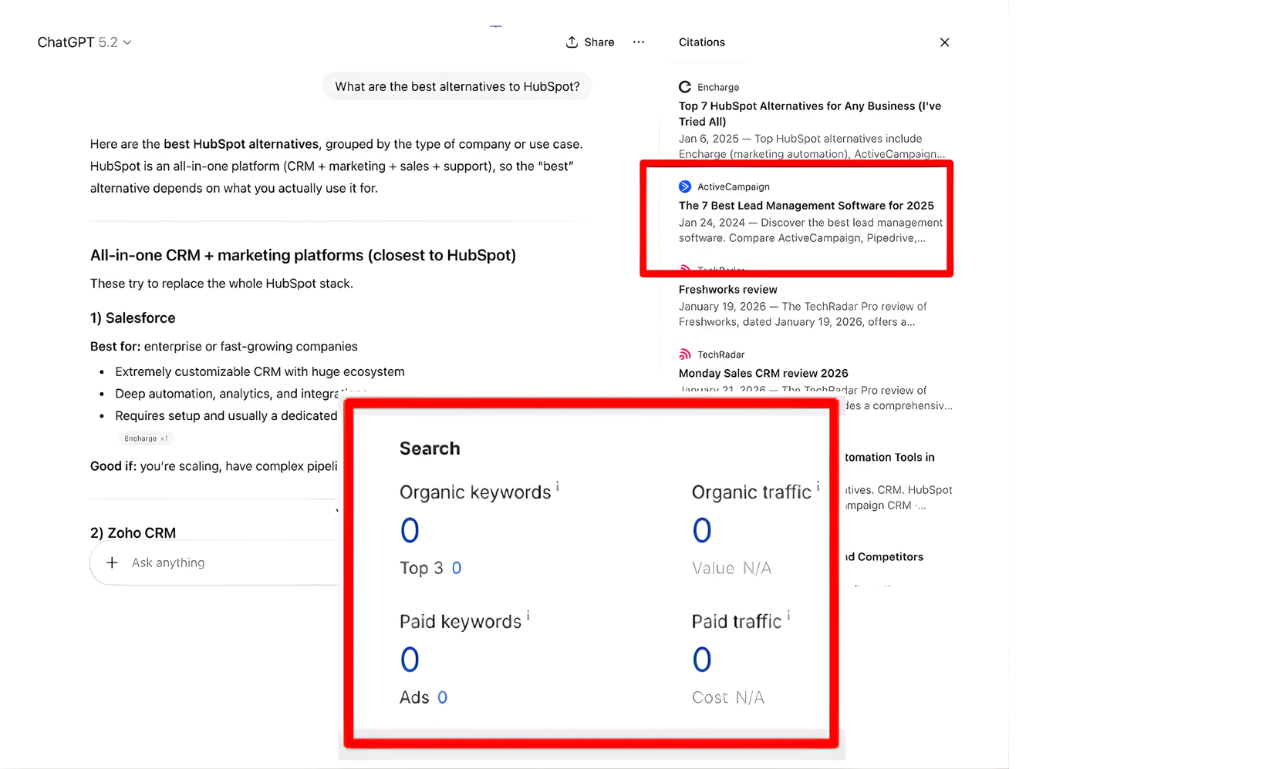

Top-ranking listicles are the dominant citation source across AI tools. Our LLM citations study of 10,000 citations found that they account for 50% of all citation sources.

So if you’re a SaaS company or agency looking to get cited by AI, you need to publish listicles on your own website and get featured on third-party sites through outreach. The more listicles you appear in, the better your LLM visibility.

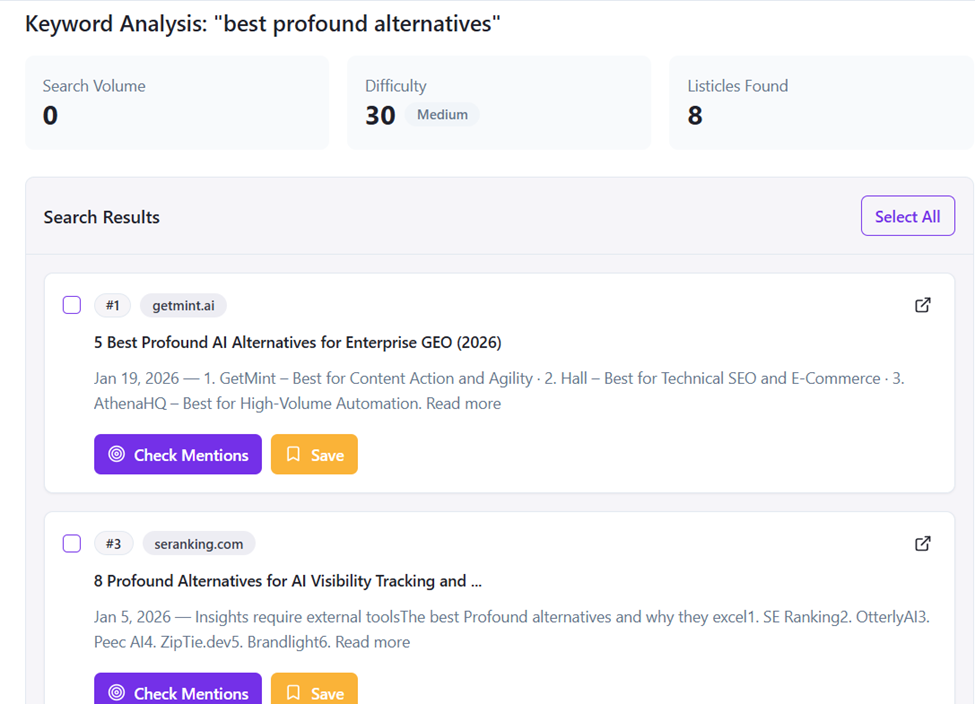

The goal is to target top-ranking listicles for your core business keywords, such as “tools”, “software”, and “alternatives”. Headlines like “Top 11 LLM Visibility Tools in 2026” and “Best Profound Alternatives for Agencies” are exactly the format you’re after.

But why does this matter so much?

In SaaS, potential customers rarely make quick decisions. Before committing, they research thoroughly and compare options. They look for brands that understand their problem and feel equipped to solve it.

Listicles meet that need perfectly. They offer structure, a clear breakdown of options, and obvious next steps, making them one of the most effective formats for reaching high-intent buyers. These are the articles people read right before making a purchase decision.

Sreeram Sharma, SEO Consultant at Angleout, puts it well: “I mainly work with SaaS websites, and whenever we begin an SEO campaign, the very first thing I suggest is to publish more BOFU and MOFU content. This primarily includes listicles, alternatives, and comparison guides. We have also checked it on Google Analytics, and these are the pages that have brought us the most traffic from AI overviews, apart from the homepage.”

If you want practical tips on how to write listicles that get cited by AI, this article is worth a read.

Listicles may be the top format for AI citations, but they’re also hard to write well. A 10-item listicle is essentially 10 mini-articles in one. Each entry needs its own description, use case, features, pros, cons, and pricing. It takes real time and research to get right.

Most listicles online skip that work. They rehash the same points, rely on keyword stuffing, and hope nobody notices. The ones that actually get cited are built on real experience: tested tools, honest opinions, and specific details that readers can’t find anywhere else.

We stand by this at Quoleady. Our Director of Operations, Iryna Kutnyak, puts it this way: “A key part of the process was actually testing each competing tool ourselves rather than compiling information from existing articles. We created accounts, set up campaigns, explored features, and documented differences between products. This allowed us to include concrete details and real comparisons instead of repeating the same information already circulating online.”

She also noted that publishing large volumes of generic, AI-optimized content tends to underperform: “Listicles based on real product testing are much more likely to appear in AI answers than articles that simply compile information from secondary sources.”

Ugljesa Djuric, Founder and CEO at ContentMonk, echoes this: “Write deep, honest listicles and comparison articles. Cover the real questions buyers have. Include genuine comparisons and opinions. Do it well, and AI doesn’t just cite you—it starts forming opinions about your entire category based on your content.”

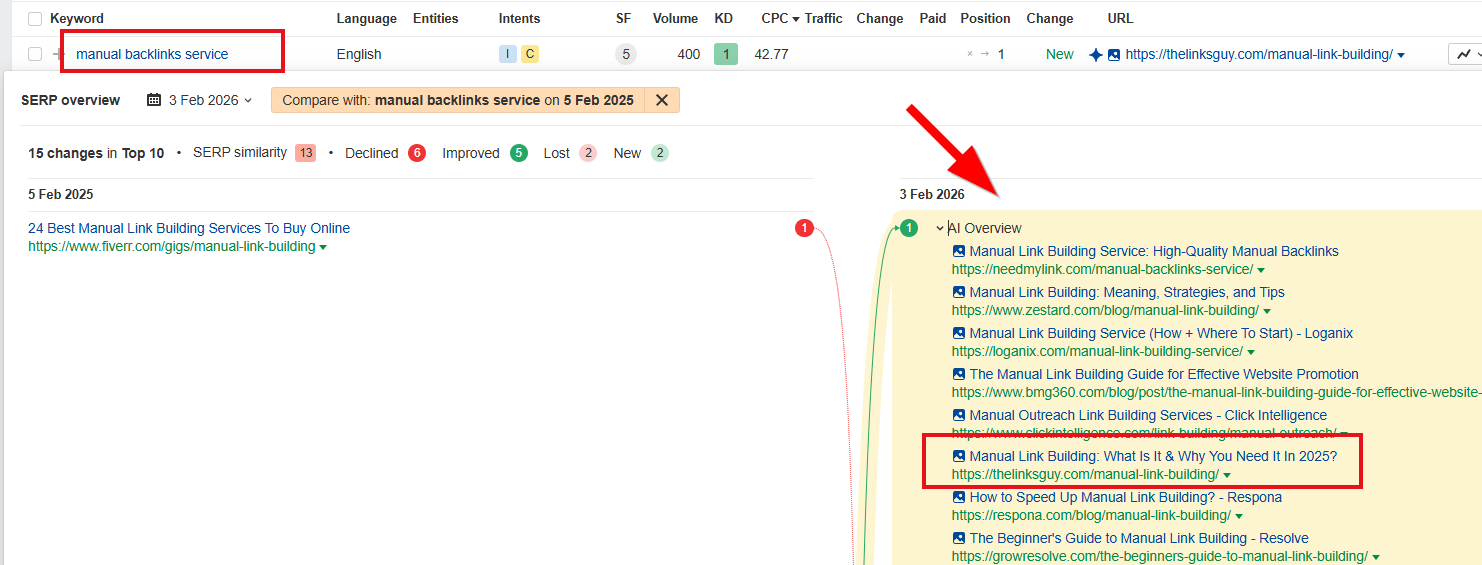

Most advice about AI visibility defaults to the same playbook: publish more, optimize harder, scale faster. Amit Raj, Founder and Link Building Consultant at The Links Guy, ran an experiment that challenges all of that.

Over the past year, he has published zero new content. Instead, he took six existing blog posts and refreshed them heavily with practitioner-only observations and specific examples. “After refreshing them, I started appearing in more AI Overviews for relevant US keywords.”

That’s what actually made the difference. Forget about the word count or publishing frequency and focus on making sure your content adds something that doesn’t already exist online.

He also noticed something interesting: “My ‘Manual Link Building’ page wasn’t refreshed and wasn’t ranking highly, but it still appeared in AI Overviews. It has strong internal linking through my mega menu and footer. Google may be treating it as a topical hub, which seems to compensate for recency.”

It’s a reminder that topical authority and domain authority, which AI systems understand through smart site structure and internal linking, can carry weight, even when individual pages haven’t been recently updated.

Katya Rozenoer, Co-founder of Blastra, tells the same story from a different angle. Starting with an unknown domain and zero reputation, her team skipped generic SaaS content entirely and went deep on their niche: B2B software directories. They built a knowledge hub around it: a directories database, guides for each directory, and a catalog of software badges and awards.

The results came faster than expected. “We started this content work just about a month ago, and it’s already bringing results. Try asking an LLM how to earn Capterra badges, how Trustpilot recognition works, whether Gartner Peer Insights is worth it, or how to get ranked on G2—we will be listed among the sources, often above the original sources themselves.”

She says: “There are no hacks or easy recipes. We ensure our brand has the authority of directories that explain to LLMs what we do, and then our content speaks for itself.”

Did you know that adding statistics, expert citations, and quotes from relevant sources can boost your content’s visibility in AI-generated responses by up to 40%? Researchers from Princeton University and IIT Delhi confirmed this after testing nine generative engine optimization strategies against 10,000 real-world queries to find out what actually gets content cited by AI.

The reason these elements have such a strong impact comes down to how AI platforms are built. When you ask ChatGPT or Perplexity a question, the platform doesn’t just generate an answer from memory. It first retrieves relevant content from the web, evaluates it, and uses it to construct a response.

This process is called Retrieval-Augmented Generation, or RAG, and it’s what makes the quality of your content so important for AI visibility.
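To make the retrieve-then-generate flow concrete, here is a minimal sketch in Python. It is a toy under stated assumptions, not how ChatGPT or Perplexity actually work: the `DOCUMENTS` list, the example URLs, and the word-overlap scoring are all hypothetical stand-ins for a real search index and ranking model, and the final call to a language model is left out.

```python
import re

# Hypothetical mini "web index". A real system would query a search index
# or vector store instead of a hard-coded list.
DOCUMENTS = [
    {"url": "https://example.com/best-pm-tools",
     "text": "Best project management tools for remote teams: pricing, pros, cons."},
    {"url": "https://example.com/company-news",
     "text": "Our company picnic was great fun this year."},
    {"url": "https://example.com/trello-alternatives",
     "text": "Trello alternatives compared for remote project management teams."},
]


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[dict], top_k: int = 2) -> list[dict]:
    """Rank documents by the number of words shared with the query (toy relevance)."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(doc["text"])), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop documents with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, sources: list[dict]) -> str:
    """Assemble what a model would receive: retrieved sources first, then the question."""
    context = "\n".join(
        f"[{i}] {doc['url']}: {doc['text']}" for i, doc in enumerate(sources, start=1)
    )
    return (
        "Answer using only these sources and cite them by number.\n"
        f"{context}\n\nQuestion: {query}"
    )


query = "best project management tool for a remote team"
sources = retrieve(query, DOCUMENTS)
prompt = build_prompt(query, sources)
print(prompt)
```

The point of the sketch is the order of operations: a page that is never retrieved never reaches the prompt, so it cannot be cited, however good it is.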

AI systems look for sources that appear credible and trustworthy. A named expert with a title, a company, and something specific to say is one of the clearest trust signals they can detect. Original statistics, proprietary research, and concrete numbers work the same way. They give AI something solid to anchor its answers to.

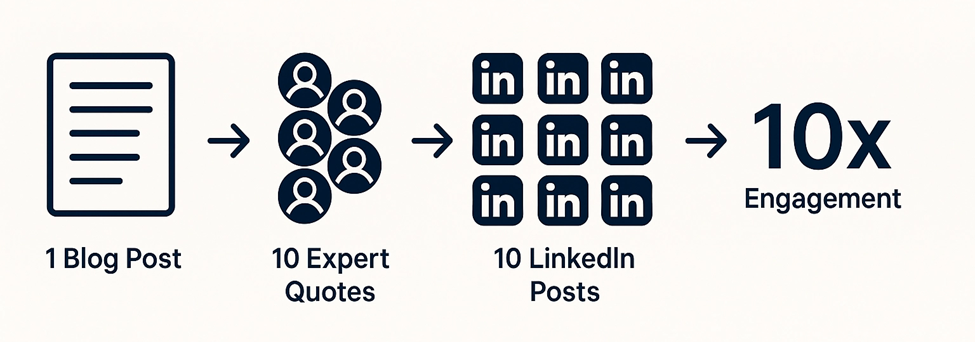

Expert quotes also help you reach a wider audience (if you make sharing easy). When you feature someone in your article, they have a reason to share it. Their name is on it, their insight is in it, and most contributors will post it on LinkedIn without you even having to ask. Tools like RoundupHero make this easier by turning each contribution into something contributors can quickly share.

So one article with ten expert quotes can turn into ten LinkedIn posts, each reaching a completely different audience, boosting citation odds, improving AI retrieval, and driving direct traffic back to your site.

Listicle outreach has become very competitive and expensive. In our experience, a single placement can cost as much as $9,000. It is still worth doing, and the prices signal how much the market values these placements.

When you launch a new SaaS product, nobody knows you exist. You can publish the best content in the world, but if the only place talking about you is your own website, AI systems have very little to work with.

That’s why third-party source coverage is so important. The more places that mention you, describe what you do, and associate you with the right keywords, the clearer the picture AI has of your brand. For new businesses, especially, it’s how you get on the map.

Iryna Kutnyak, Director of Operations at Quoleady, has seen this play out directly with clients: “AI answers tend to aggregate information from multiple sources, so when a product appears both on its own comparison pages and across independent listicles, it becomes easier for models to recognize and reference it.”

The results back this up. “On one client campaign, the comparison content cluster alone generated 30–40% of new organic traffic within a few months, with conversion rates two to three times higher than standard blog posts.” That’s the compounding effect of showing up in multiple places at once: better AI visibility, more organic traffic, consistent brand signals across the web, and higher-intent visitors when they land.

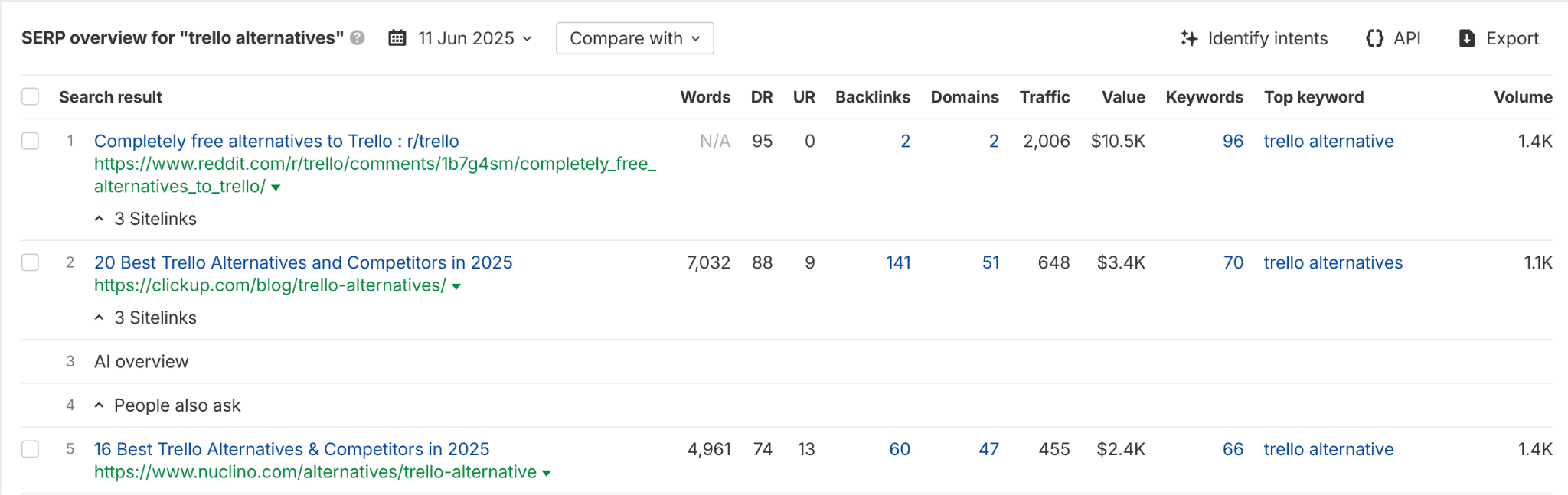

That said, not all placements deliver the same return. Evelina Milenova, Senior SEO Specialist at Hostinger, secured 67 listicle features for a single brand in late 2025 and tracked exactly which ones held up. One placement stood out: a listicle on a direct competitor’s site targeting the main comparison keyword for that category. It drove AI Overview appearances for four months. Most other placements (affiliate sites, guest posts, and industry news sites) disappeared from AI citations within two weeks.

Her conclusion: “If your link-building resources are limited, exploring ways to get mentioned on competitor listicles will give better and longer-lasting results. LLMs have a strong recency bias, so if you stop producing new content or updating existing content, citations will sharply drop within a month.”

The same logic applies to Digital PR. Matthew Foster, Senior Digital PR Manager at Distinctly, found that not all media coverage carries equal weight when it comes to AI visibility. His team analyzed 6,780 data points across 452 UK publications to understand which ones AI crawlers can actually access, so they could target those when building media lists for clients.

It worked: “By prioritizing AI-open publications, we secured coverage in The Independent for a client, and that coverage began appearing in AI results for related keyphrases. Digital PR can influence AI visibility in two ways: directly, through coverage in publications AI crawlers can cite, and indirectly, by building the brand’s authority and expertise over time.”

The takeaway across all three examples is the same: third-party coverage matters, but where you appear matters just as much as how often.

Review platforms like G2, Capterra, and GetApp are just as important to get right.

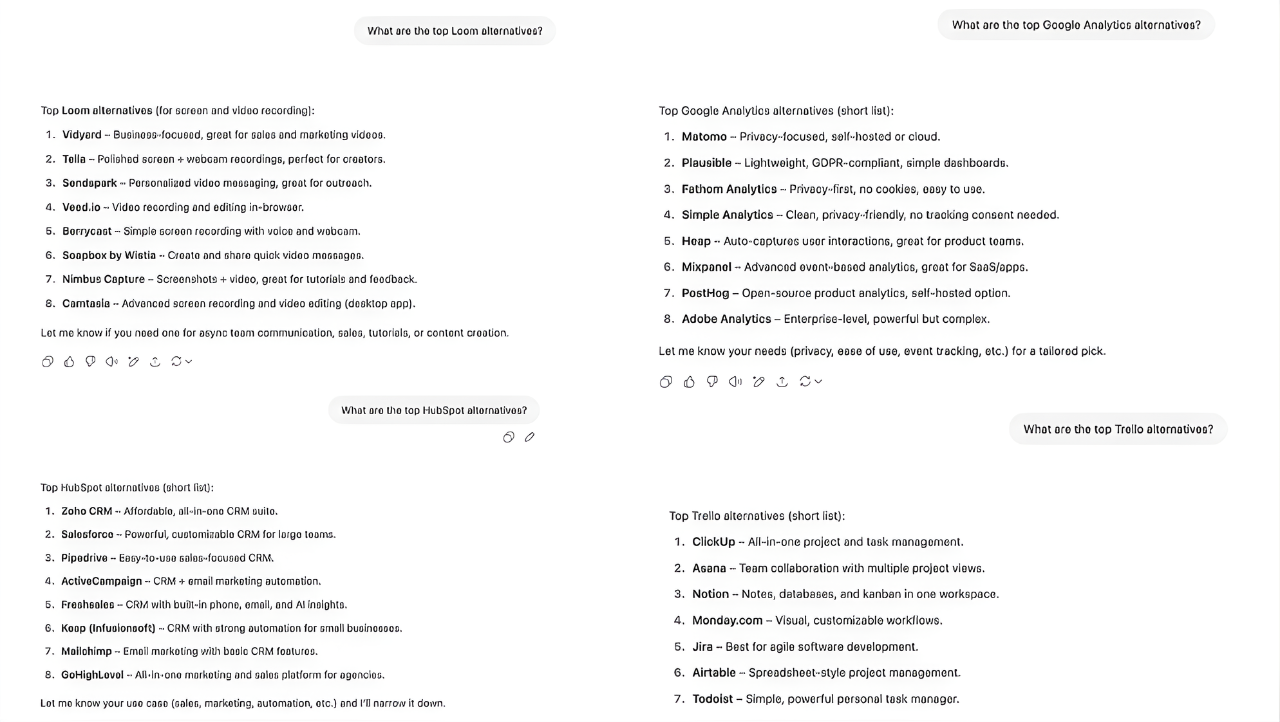

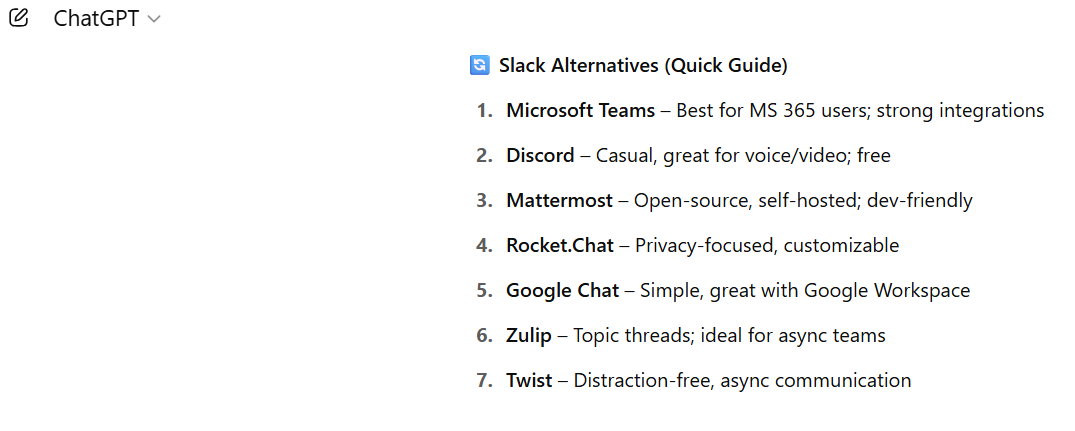

At Quoleady, we tested dozens of high-intent “alternatives” keywords, such as “Trello alternatives,” “Zapier alternatives,” and “Slack alternatives,” to find out how G2 and Capterra reviews influence ChatGPT rankings. We asked ChatGPT for its recommended tools for each keyword and analyzed how much weight review platforms carried in shaping those responses.

The results were clear: 100% of the tools mentioned in ChatGPT answers had reviews on Capterra, and 99% had reviews on G2. These platforms act as a basic inclusion signal. If you’re not listed, you’re likely invisible to AI altogether.

That said, review presence alone won’t get you ranked. We also found that some tools with strong review profiles still ranked lower than others with fewer reviews and weaker ratings. G2 and Capterra help establish legitimacy in the eyes of AI, but they don’t determine placement. Being on review sites is just the starting point.

Be sure to check out our entire LLMO research.

Review platforms are not the only place where AI picks up signals about your brand. User-generated content (UGC) platforms like Reddit and Wikipedia matter too, and the data backs it up.

Reddit’s dominance in Google search grew significantly between 2023 and mid-2025, making it the second-most visible domain in Google Search in the US, according to Sistrix. But the picture has shifted since then. Reddit’s search visibility began declining after August 2025, dropping to the fourth-most visible domain in Google USA.

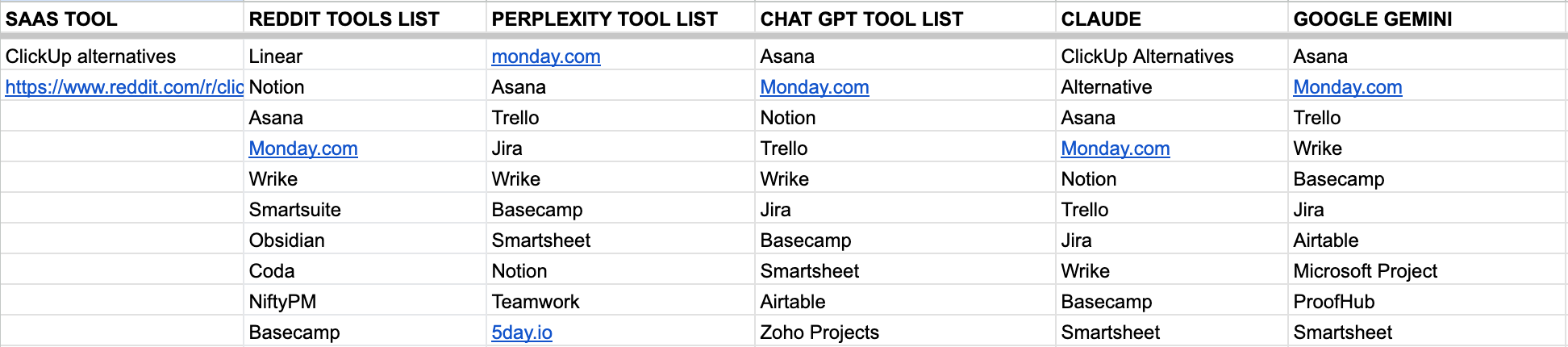

We ran an experiment to find out whether Reddit influences what AI tools recommend. We took dozens of high-intent “alternatives” keywords where Reddit ranks in Google’s top five search results, analyzed which tools Reddit was recommending, then asked ChatGPT, Claude, Perplexity, and Gemini the same questions and compared the results.

The overlap between Reddit’s recommendations and AI answers was real but partial. Perplexity aligned with Reddit most often at 39%, followed by Gemini at 38%, ChatGPT at 33%, and Claude at 31%.
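If you want to run a similar comparison for your own keywords, the calculation can be sketched in a few lines of Python. This is one plausible way to define alignment, assumed here for illustration: the share of Reddit-recommended tools that an assistant also recommends. The tool sets below are made-up examples, not data from our study.

```python
def overlap_share(reddit_tools: set[str], llm_tools: set[str]) -> float:
    """Share of Reddit-recommended tools that the assistant also recommended."""
    if not reddit_tools:
        return 0.0
    return len(reddit_tools & llm_tools) / len(reddit_tools)


# Hypothetical results for a single "alternatives" keyword.
reddit_tools = {"notion", "clickup", "asana", "basecamp"}
answers = {
    "ChatGPT": {"notion", "clickup", "monday.com"},
    "Perplexity": {"notion", "asana", "clickup", "wrike"},
}

for platform, tools in answers.items():
    print(f"{platform}: {overlap_share(reddit_tools, tools):.0%} overlap with Reddit")
```

With these example sets, ChatGPT shares 2 of the 4 Reddit picks (50%) and Perplexity shares 3 of 4 (75%). Averaging that figure over many keywords gives a per-platform number you can compare over time.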

Well-known tools like Notion, Slack, and ClickUp showed up everywhere. But some tools that dominated Reddit threads never appeared in a single AI response, usually because their mentions were buried in posts Google couldn’t crawl or index properly.

Reddit does influence AI answers, but indirectly. If Reddit ranks for your keywords, being part of those conversations is worth it. But it’s just one piece of a much broader source coverage picture.

Rokas Stankevicius, Founder of AIclicks, has seen this firsthand: “The biggest insight from tracking brands: the gap between you and your top competitor is almost always source coverage, not content quality. AI engines pull info from Reddit threads, blog posts, directory listings. Once you know which sources aren’t mentioning you, the actions become obvious. Brands that follow this process consistently see 20–25% visibility gains within a few weeks of taking action.”

Andrii Shum, Head of SEO at SeoProfy, agrees that source coverage matters more than any single platform: “The key thing is trying to get your brand mentioned on the pages that are cited in AI answers, whether it’s Clutch or Reddit is secondary.”

He also flags something worth keeping in mind: tracking the impact of these efforts is genuinely difficult. In most cases, LLMs don’t provide a direct link, so users copy the brand name and search for it separately. “We monitor prompts in Ahrefs Brand Radar, but the data is still far from accurate.”

Optimizing for AI visibility builds on the same fundamentals that have always driven good SEO: authoritative content creation, strong backlinks, clear site structure, and topical depth.

AI systems and traditional search engines are both trying to solve the same problem: find the most credible, relevant content chunks for a given query. They often reach the same answer.

Stanislav Farkas, SEO Specialist at FASTrategies, tested this directly. He compared the sources cited by Perplexity with Google Search results for the same query and found a strong overlap: most of the pages Perplexity cited were already ranking on page 1.

His conclusion is practical: “Ranking in top Google positions is still the biggest driver of appearing in AI answers. Focus on BOFU and money pages, build strong topical authority and brand presence. Use an 80:20 approach: a solid SEO foundation making up around 80%, complemented by AI-focused optimization for the remaining 20%. Once pages reach top positions and demonstrate real expertise, they start getting picked up more consistently in AI answers.”
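A check like this is easy to repeat for your own queries. The sketch below assumes you have already copied the URLs an AI tool cited and the URLs on Google’s first page into two lists by hand. The `normalize` helper and all example URLs are hypothetical, and the normalization rules (drop `www.`, query strings, and trailing slashes) are a simplification.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variants compare equal."""
    parts = urlsplit(url)
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/")
    return host + path  # query strings and fragments are ignored


def share_on_page_one(cited_urls: list[str], page_one_urls: list[str]) -> float:
    """Fraction of AI-cited URLs that also appear on the first results page."""
    cited = {normalize(u) for u in cited_urls}
    page_one = {normalize(u) for u in page_one_urls}
    return len(cited & page_one) / len(cited) if cited else 0.0


# Hypothetical URLs collected by hand for one query.
cited = [
    "https://www.example.com/best-crm/",
    "https://example.org/crm-guide?utm_source=ai",
    "https://example.net/forum/thread-1",
]
page_one = [
    "https://example.com/best-crm",
    "https://example.org/crm-guide",
    "https://example.io/top-crm",
]

print(f"{share_on_page_one(cited, page_one):.0%} of cited pages rank on page 1")
```

Normalizing first matters because the same page often shows up with tracking parameters or a trailing slash in one list and not the other.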

The takeaway is simple. You don’t need to choose between traditional SEO and GEO. Chasing AI visibility without a strong SEO foundation is building on sand. Get the fundamentals right first, and AI visibility tends to follow.

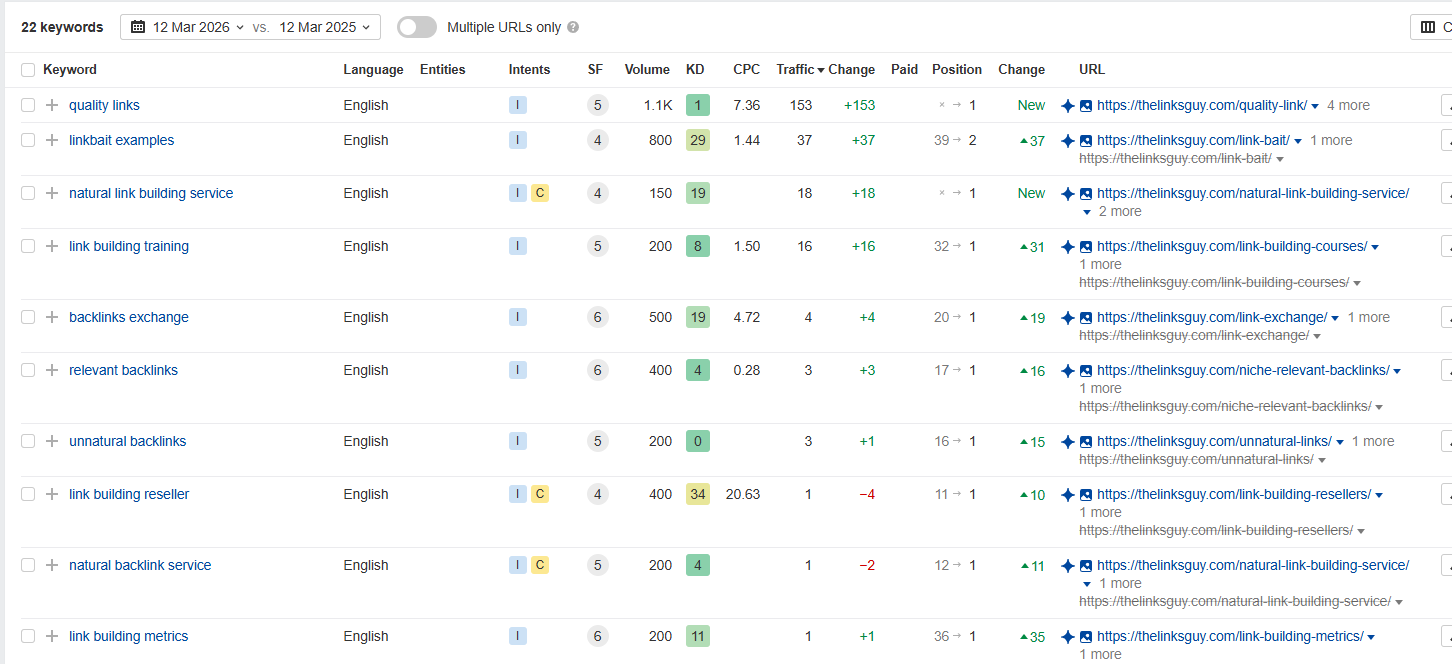

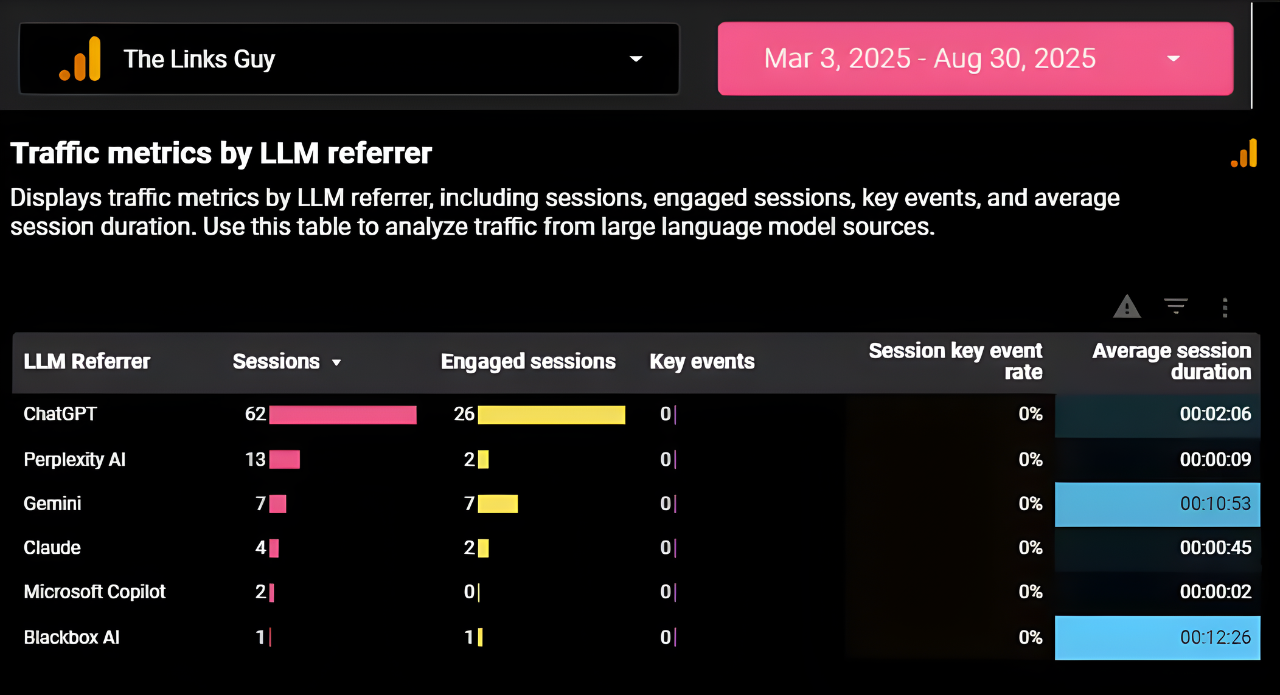

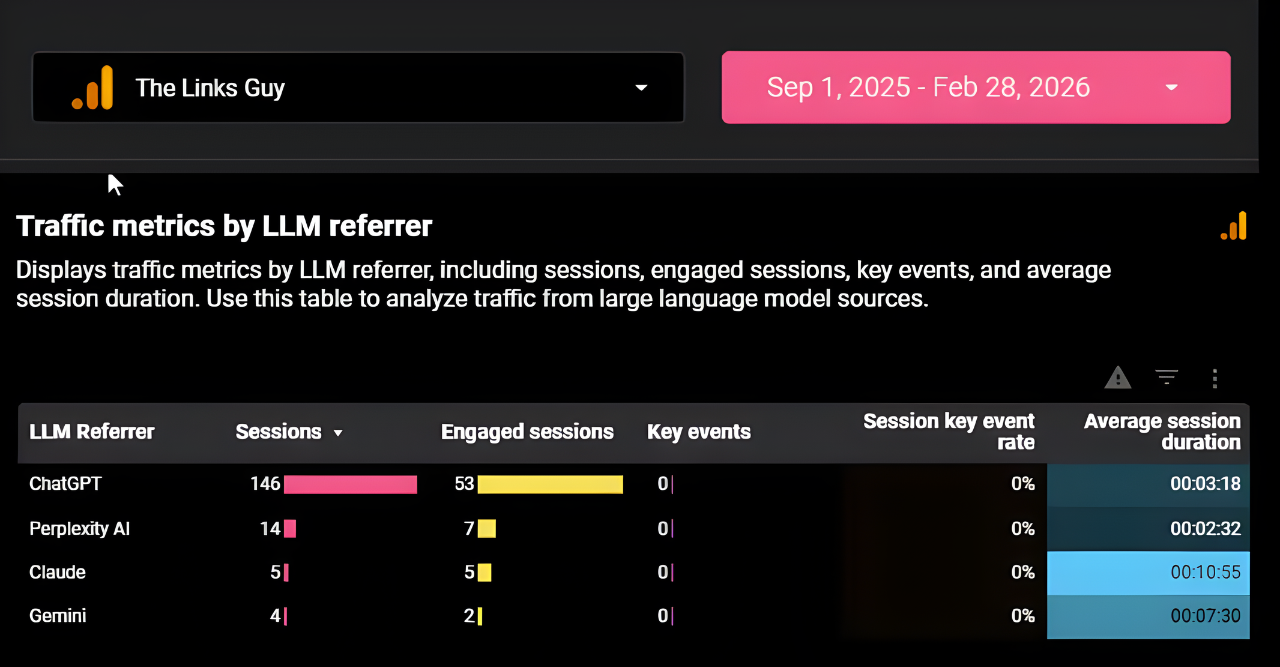

Amit Raj, Founder and Link Building Consultant at The Links Guy, saw the same pattern play out through his own link-building activity. Over nine months, he built around 46 backlinks to his site, seven of which carried branded anchors, including a placement on an SEO courses listicle.

The result: LLM traffic nearly doubled, going from 89 clicks in the previous six months to 169 in the following six.

In his own words: “Small sample, but hard to call a coincidence given how competitive my target keywords are.”

It’s another example pointing in the same direction. Stronger SEO signals (backlinks, brand mentions, and authoritative placements) feed into the same credibility framework that AI systems rely on.

Matt Emgi, Founder at Emgi, ran a controlled experiment to dig deeper into how backlinks improve AI visibility.

He built content pages targeting high-intent BOFU keywords, then ran those queries through ChatGPT and AI Overviews before writing, aiming to capture the exact phrasing AI systems use to describe product features, category benefits, and model advantages.

He wrote the pages using that same vocabulary, then built backlinks with anchor text and surrounding context that matched the same language and framing. Within four weeks, the pages were being cited in both Google AI Overviews and ChatGPT.

His conclusion: “AI systems cite the page that says what they already think the answer is, in the words they already use. If you write in your own internal product vocabulary, you’re invisible. If you mirror the language the model is producing, you become an easy citation.”

That said, the overlap isn’t total. Do you have an article that gets zero traffic and ranks for no keywords? Most people do. Well, it can still be cited by ChatGPT.

Ahrefs research found that 76% of AI Overview citations come from pages ranking in Google’s top 10. But ChatGPT behaves differently: it cites content outside the top 10 far more often. So while Google rankings are still the strongest predictor of AI visibility overall, they’re not the only path in. Some content that never wins in search is quietly doing its job inside AI conversations.

As I’ve put it in a recent LinkedIn post: “Content doesn’t ‘not work.’ It works in different places now.”

Jared Carrizales, Fractional SEO Manager at JaredCarrizales.com, adds a technical dimension relevant to marketing teams and brand marketers working on AI visibility: “AI platforms need credibility and confirmation when considering what to include in answers. Authoritative mentions and high-quality backlinks drive credibility. The best place for confirmation, in my opinion, is relational structured data on the website itself.”

He tested this with a large manufacturer that had solid credibility and decent site architecture, but wasn’t showing up in any AI platform. After implementing schema markup across their materials pages and elaborating on the chemical makeup of their products, many of those pages began appearing on multiple AI platforms within days.

Rick Tousseyn, GEO & Content Strategist at OtterlyAI, adds an important nuance here. His own experiments found that schema markup’s impact on AI search is more limited than many assume: “Many AI search platforms strip the schema code when converting webpages to markdown. Research data shows that the impact of structured data is largely limited to the Google ecosystem: classic search, AI Mode, and AI Overviews, rather than broader LLM visibility.”

The pattern both experts point to is the same: structured data works as a confirmation layer, but only when external credibility signals are already in place.
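Both observations can be illustrated with a short Python sketch. The first half builds a small piece of relational structured data as JSON-LD. The second half runs a naive HTML-to-text pass and shows the markup disappearing. The product, company, and page are hypothetical, and the `VisibleText` extractor is only a rough stand-in for whatever conversion a given AI platform actually uses.

```python
import json
from html.parser import HTMLParser

# Hypothetical product. The schema.org vocabulary (Product, Organization,
# manufacturer, material) is real; every value is made up for illustration.
product_schema = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Alloy Sheet",
    "material": "Aluminium alloy (hypothetical composition)",
    "manufacturer": {
        "@type": "Organization",
        "name": "Example Manufacturing Co.",
        "url": "https://example.com",
    },
}

json_ld = json.dumps(product_schema, indent=2)
page_html = (
    "<html><head>"
    f'<script type="application/ld+json">{json_ld}</script>'
    "</head><body><h1>Example Alloy Sheet</h1>"
    "<p>Sheet stock for fabrication.</p></body></html>"
)


class VisibleText(HTMLParser):
    """Collect text nodes while skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.skipping = False
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skipping = True

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self.skipping = False

    def handle_data(self, data):
        if not self.skipping and data.strip():
            self.chunks.append(data.strip())


extractor = VisibleText()
extractor.feed(page_html)
extractor.close()
visible_text = " ".join(extractor.chunks)
print(visible_text)  # the manufacturer from the JSON-LD is not in this text
```

In this sketch the manufacturer relationship exists only inside the `<script>` tag, so a text-only conversion never sees it. If a fact matters for AI answers, it is safer to state it in the visible copy as well as in the schema.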

LLM visibility is still new enough that many tactics get hyped before anyone has properly tested them. Practitioners who have been doing this long enough have started to notice what moves the needle in digital marketing and what just sounds good on LinkedIn.

Iryna Kutnyak at Quoleady was direct about one of the most common mistakes: “Publishing large volumes of generic ‘AI-optimized’ content rarely moves the needle unless the page already has strong structure, topical authority, and external mentions.” Writing specifically for large language models (LLMs), without the fundamentals in place, doesn’t work.

Stanislav Farkas at FASTrategies echoes this: “Shortcuts and overusing listicles don’t work as well as people think. Publishing large volumes of ‘best tools’ articles outside your niche and inserting your product everywhere might work short-term, but it’s not sustainable. AI systems increasingly favor relevance, authority, and consistency over volume.”

Andrii Shum at SeoProfy adds that classic guest posts, where the brand is barely mentioned, don’t help with LLM rankings either. And Sreeram Sharma at Angleout tested llms.txt files across multiple client sites and saw no improvement in visibility whatsoever.

The honest conclusion belongs to Ugljesa Djuric at ContentMonk: “There are no universal rules here. What works for one set of prompts in one industry might not work in another, or even for different prompts in the same industry. That’s why tracking daily data with an AEO tool matters. You need to see what’s actually happening and act on it.”

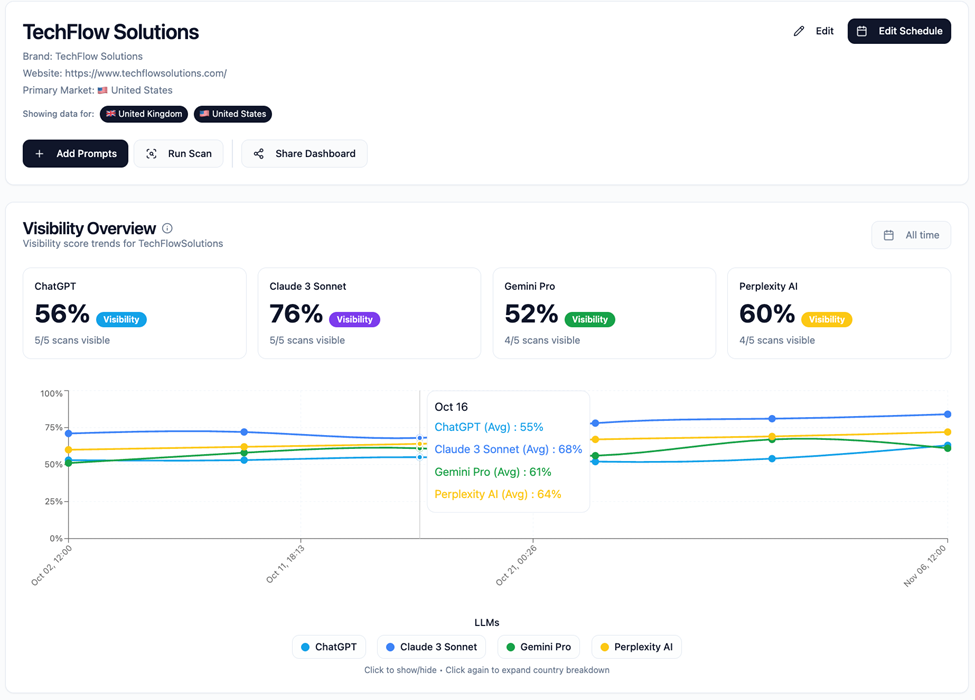

Ugljesa’s point holds true across every channel: if you’re not measuring what’s happening, you’re optimizing in the dark. With LLM visibility, that problem is even harder to ignore.

Unlike traditional SEO metrics like Google rankings or search volume, which you can check daily in Google Search Console, AI citations are harder to see. Your brand might be surfaced by AI assistants dozens of times a day or completely ignored. There is no way to know for sure without the right tools.

AI monitoring tools track where and how often your brand gets mentioned across major LLMs, the sentiment surrounding those mentions, which competitors are being cited instead of you, and how you can improve your AI visibility.

There are many LLM tracking tools on the market, such as Peec AI, Profound, Scrunch AI, and Otterly. They all track brand visibility across AI platforms in a similar way, but differ in features, depth, and pricing.

We built Allmond out of our own frustration. Working with SaaS brands on AI visibility, we kept running into the same problem: no easy way to show clients where they actually stood. Which prompts surfaced their content, which didn’t, and where the real gaps were.

So we built the tool we needed. Allmond is very easy to use, with a clean, no-fuss interface that shows you only what you need to act on. It tracks brand visibility across major LLMs, covers 67+ countries, and generates shareable reports in seconds.

There are no limits on prompts, projects, or clients. That keeps it affordable and accessible for teams of any size, whether you’re a small SaaS just getting started or an established product keeping tabs on competitors in AI-powered search.

Every practitioner we spoke to for this article came back to the same three pillars: original content, third-party presence, and LLM tracking. The tactics vary by industry, and as Ugljesa Djuric put it, there are no universal rules. But these fundamentals hold across the board. Here’s how to put them into action.

1. Audit where you currently stand. Before doing anything else, run your core keywords as prompts across ChatGPT, Claude, Perplexity, and Gemini. Note which competitors appear, which sources get cited, and where your brand is missing. This gives you a baseline to work from and makes the gaps immediately obvious.

2. Build content that adds something new. Generic, AI-optimized content published at volume doesn’t work. What works is content built on real experience, first-hand observations, and specific examples that don’t already exist online. Refresh existing posts with practitioner-level insight before creating new ones. Before writing, run your target keywords through ChatGPT and AI Overviews and mirror the exact phrasing they use. AI systems cite the page that already sounds like their answer.

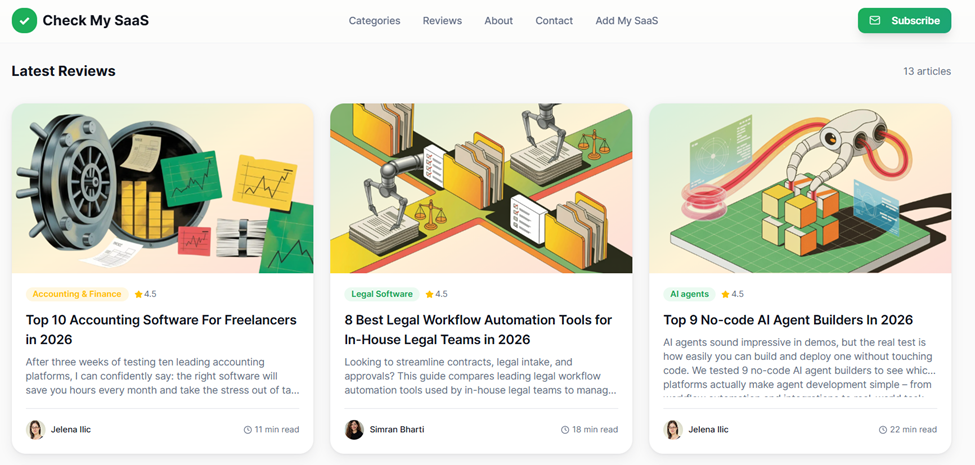

3. Prioritize BOFU content formats. Listicles, comparison articles, and alternatives pages are the formats AI engines cite most. They’re also what high-intent buyers read right before making a purchase decision. If you don’t have them, start there. And mind the placements: competitor listicles tend to last months in AI citations, while affiliate sites and guest posts often disappear within two weeks. For digital PR, prioritize publications that AI crawlers can actually access.

4. Get listed on review platforms. Our research found that 100% of the tools cited by ChatGPT for alternatives queries had reviews on Capterra, and 99% had reviews on G2. Being listed is the minimum requirement for AI inclusion. If you haven’t claimed and optimized your profiles, do it now.

Beyond the major platforms, independent review sites are increasingly cited as sources in AI-driven discovery. CheckMySaaS is one worth knowing: you can submit your tool for independent researchers to review based on real usage, covering genuine pros, cons, and day-to-day experience. That kind of unbiased coverage carries weight with AI systems.

5. Get featured in third-party listicles. Outreach to high-ranking listicles in your category is one of the highest-ROI activities to win LLM visibility, even if it’s competitive and sometimes expensive. Focus on getting featured on competitor sites and established players in your niche first, as those placements tend to last longer and carry more weight. Remember that source coverage matters more than any single platform.

To find the right opportunities and manage the process, ListicleManager helps you discover relevant listicles in your category, identify which ones are worth targeting, and run the outreach.

That way, your product gets in front of buyers who are already looking for a solution like yours, including those arriving via zero-click searches who never visit a traditional results page.

6. Be active where your buyers talk. Reddit still influences AI answers, particularly for tools with consistent, genuine community presence. If relevant subreddits exist for your category, participate authentically. Buried, link-free mentions don’t carry weight, but visible, active participation does.

7. Add expert quotes and original data to your content. Research from Princeton University and IIT Delhi found that adding statistics, citations, and expert quotes can boost AI visibility by up to 40%. Every original data point and named expert you include is an additional signal for AI systems to work with.

8. Implement structured data. Schema markup helps AI systems understand and confirm what your content is about, but only when the off-site credibility is already there. As Jared Carrizales put it, off-site factors are the trigger, and the website is the confirmation. That said, keep expectations realistic: research shows that schema markup’s impact is largely limited to the Google ecosystem and has less influence on broader LLM visibility.

9. Don’t abandon SEO strategy. Google rankings and AI citations overlap more than most people expect. A strong SEO foundation, topical authority, quality backlinks, and well-structured pages remain the most reliable path to AI visibility. Use an 80/20 approach: solid SEO fundamentals first, with answer engine optimization layered on top.

10. Measure LLM visibility and adjust. Use an AI tracking tool to monitor brand visibility across AI models, track which prompts surface your content, and identify where competitors are showing up instead of you. Platforms like Peec AI, Profound, Otterly, and Allmond help with this. Find the one that fits your team’s size and workflow.
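For the manual audit in step 1, a spreadsheet works, but the counting can also be sketched in Python. The example assumes you have pasted each assistant’s answer into a dictionary by hand. It does not call any AI platform, and the brand names, prompts, and answers are placeholders.

```python
import re
from collections import Counter


def mentions(answer: str, brand: str) -> bool:
    """True if the brand name appears as a whole word, ignoring case."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, answer, flags=re.IGNORECASE) is not None


def visibility_report(
    answers: dict[str, dict[str, str]], brands: list[str]
) -> dict[str, Counter]:
    """Count, per platform, how many prompts produced an answer naming each brand."""
    report: dict[str, Counter] = {}
    for platform, by_prompt in answers.items():
        counts = Counter({brand: 0 for brand in brands})
        for answer in by_prompt.values():
            for brand in brands:
                if mentions(answer, brand):
                    counts[brand] += 1
        report[platform] = counts
    return report


# Placeholder answers, keyed by platform and then by prompt.
answers = {
    "ChatGPT": {
        "best llm visibility tools": "Popular options include CompetitorA and CompetitorB.",
        "CompetitorA alternatives": "Consider YourBrand or CompetitorB.",
    },
    "Perplexity": {
        "best llm visibility tools": "CompetitorA is often cited.",
        "CompetitorA alternatives": "CompetitorB is a common pick.",
    },
}

report = visibility_report(answers, ["YourBrand", "CompetitorA"])
for platform, counts in report.items():
    print(platform, dict(counts))
```

A table like this is the baseline: rerun the same prompts after each round of content or outreach work and compare the counts. Because AI answers vary between runs, treat a single pass as a rough signal and not a precise measurement.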

If you’d rather have experts handle this, Quoleady works with SaaS brands to build content, secure placements, and systematically improve LLM visibility. Everything covered in this article is what we do every day. Schedule a call and let’s talk.

Focus on three things: publish original, experience-based content in formats AI search engines favor (listicles, comparisons, alternatives pages); build third-party presence through review platforms, listicle features, digital PR, and community mentions; and earn quality backlinks and brand mentions on trusted sites. The more places that reference your brand in the right context, the more likely AI systems are to cite you.

Yes, significantly. Research shows a strong overlap between pages that rank on Google’s first page and pages cited by AI tools. That said, informational queries tend to show less overlap. ChatGPT in particular cites content outside the top 10 more often than Google’s AI Overviews do. A strong SEO foundation remains the most reliable path to AI visibility, but it’s not the only one.

You need an LLM monitoring tool. These tools run prompts across major AI platforms and show you where your brand appears in AI-driven search, where competitors are cited instead, and which topics you’re missing entirely. Tools like Allmond make this straightforward: you can track visibility across ChatGPT, Gemini, Claude and Perplexity, spot gaps, and generate shareable reports without needing a dedicated analyst.
